In 2026, artificial intelligence will help companies work faster and build better products. But it has also created a massive $4.88 million blind spot for the average business.

You probably added several new AI tools to your network this year. Your traditional security tools are completely useless against these specific attacks. A standard firewall cannot stop a poisoned data file. This reality makes secure AI adoption incredibly difficult for most IT teams.

You need a structured plan for enterprise AI risk management of these 10 proven AI security strategies; only the top 15% of security teams use these specific methods to defend their infrastructure. These strategies actually work.

#1. Find and Fix “Shadow AI” in 3 Steps

Your team is likely using AI tools right now without telling you. We call this “Shadow AI.” Employees use these unauthorized LLMs to write emails or write code faster. But this puts your company data at massive risk.

In fact, 65% of AI tools operate without IT approval. IBM data from 2025 shows Shadow AI causes 20% of all data breaches. Remember the 2025 OmniGPT breach? That single mistake exposed 34 million lines of private company chats.

AI Security Posture Management (AI-SPM). AI-SPM tools scan your network specifically for hidden AI usage. They give you the visibility needed for proper Shadow AI governance.

#2. The 5-Minute Fix for Prompt Injection Attacks

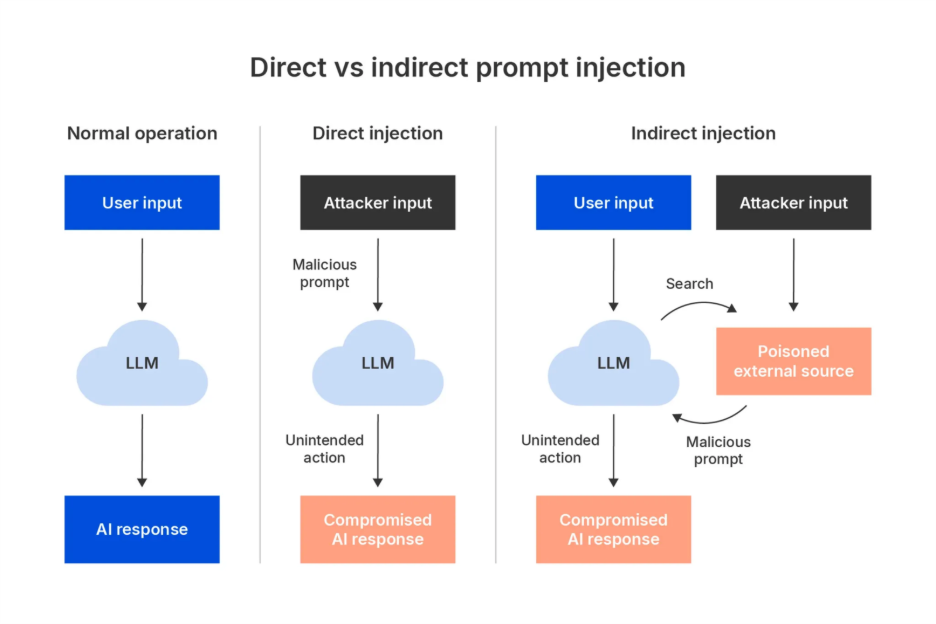

Hackers use a trick called prompt injection to break your AI. They hide secret commands in the text they type. The AI reads the text and obeys the hacker’s hidden rules instead of yours. This can happen directly from a user or indirectly through files the AI reads.

According to the OWASP Top 10 for LLMs 2025 (LLM01:2025), this is the number one threat to your systems. Shockingly, 94.4% of untested AI models fall for direct prompt injections.

Think of an AI gateway as a smart bouncer for your AI model. It sits between the user and the AI. It reads both the user’s prompt and the surrounding context. It performs strict input sanitization to scrub away hidden hacker commands. Only clean text reaches your actual LLM.

#3. Why Your Annual Pen Test Misses AI Threats

Hackers use completely different methods to trick AI systems. They use model evasion to bypass your safety filters. They also trick the AI into leaking private data. You must fight back with continuous AI red teaming.

AI red teaming means acting like a hacker to attack your own AI. You run adversarial simulations to find out exactly where your model fails. This reveals hidden security holes before the bad guys find them. In fact, AI-specific red team exercises discover 3.2 times more security risks than standard pentesting.

You cannot just do this once. Every time you update your AI, you must test it again. It must become an automatic part of how you build and release software. Making this testing continuous ensures your defenses stay strong against fresh attacks.

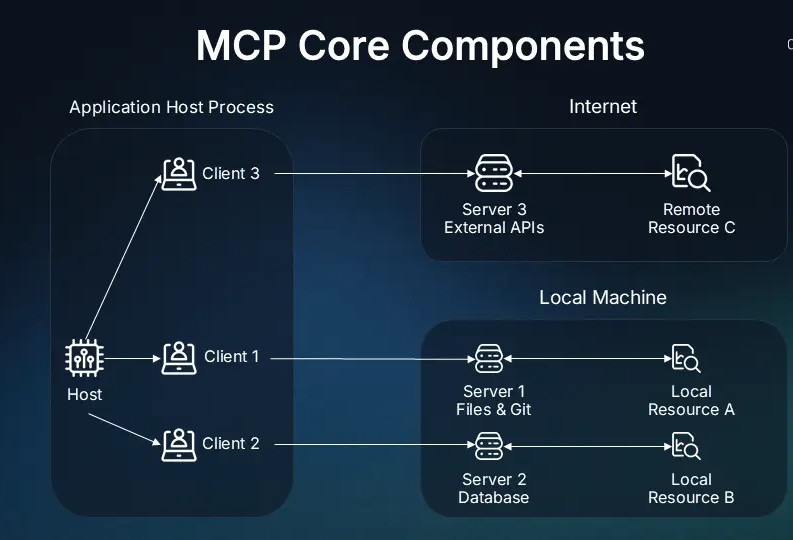

#4. How to Stop Tool Poisoning in Your Model Context Protocol (MCP)

AI models need access to your internal databases to be useful. They connect to your systems using the Model Context Protocol. But this connection creates a massive security hole.

Hackers can trick this connection. This is called tool poisoning. They hide bad instructions in the data the AI reads. The AI then runs unauthorized commands directly on your systems. A hijacked AI can delete files or shut down your entire network.

Add MCP guardrails to check every single command. These guardrails sit between the AI and your database. They review the command before it actually runs.

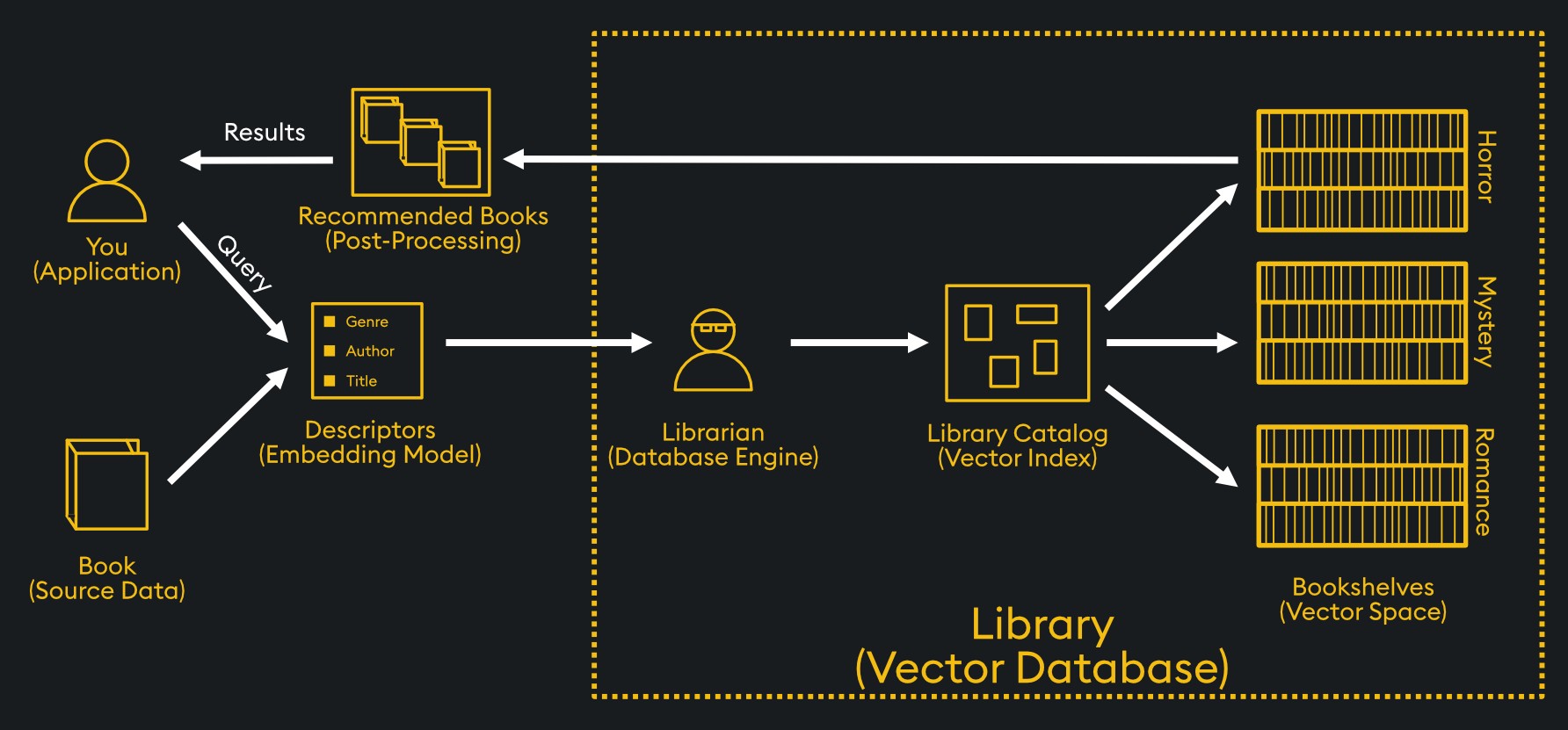

#5. Prevent RAG Data Poisoning in Vector Databases

Your AI probably reads from your private company files to answer employee questions. This setup is called Retrieval-Augmented Generation (RAG). Attackers know this. They try to sneak bad data into your files. They poison the vector databases your AI trusts.

When the AI reads this bad data, it learns the wrong facts. It then gives your employees false or harmful answers. The OWASP Top 10 lists this as a major threat (LLM04:2025 and LLM08:2025).

First, lock down who can edit your company documents. Second, check your data constantly. Use math tools like cryptographic hashing to lock your files. This proves hackers have not secretly changed your source data. Third, perform embedding integrity checks every day.

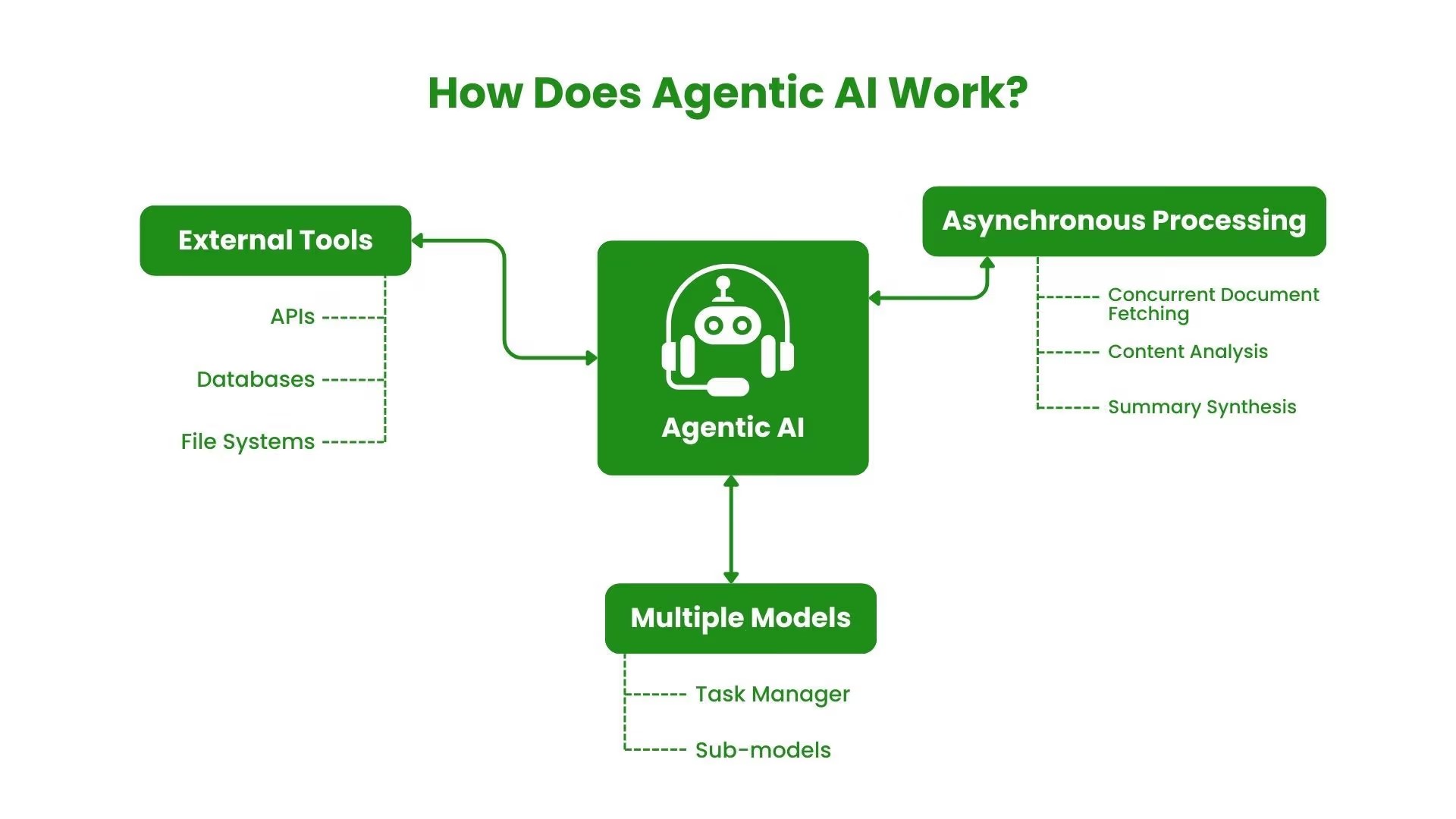

#6. You Must Limit “Excessive Agency” in Agentic AI

AI is no longer just a simple chat window. Agentic AI actually does tasks for you. It can send emails, buy software, or change system settings on its own. Giving AI too much power is highly dangerous. We call this excessive agency.

In early 2025, security tests checked these multi-agent systems without safety limits. Hackers achieved a 100% compromise rate. They easily hijacked the agents and stole data.

You must restrict what these smart agents can do. Give them only the exact API access they need to do their specific job. If an agent only needs to read emails, do not permit it to delete them.

#7. Stop AI from Leaking Private Data

Hackers know how to exploit this memory. They ask the AI tricky questions to force it to repeat your private information. They can steal customer names, addresses, and credit card numbers right from the chat window.

You must limit what the AI learns in the first place. Practice strict data minimization. Only feed the AI the data it absolutely needs to work. Remove all sensitive details before the AI ever sees the files.

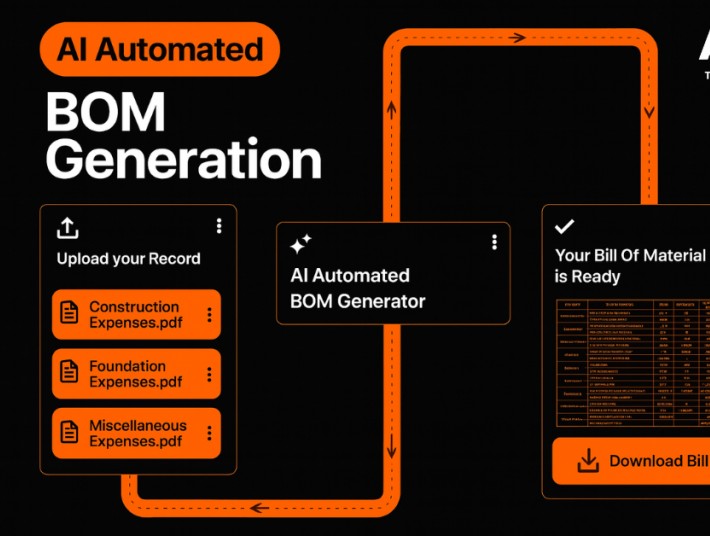

#8. Protect Your Supply Chain with an AI-BOM

Force your software vendors to provide an AI-BOM. This stands for Artificial Intelligence Bill of Materials. It lists every dataset, base model, and code library they used to build their third-party AI tools.

It lets your security team check the vendor’s work for known flaws. In fact, the EU AI Act makes this kind of reporting mandatory by mid-2026. Refuse to buy from vendors who hide their ingredients.

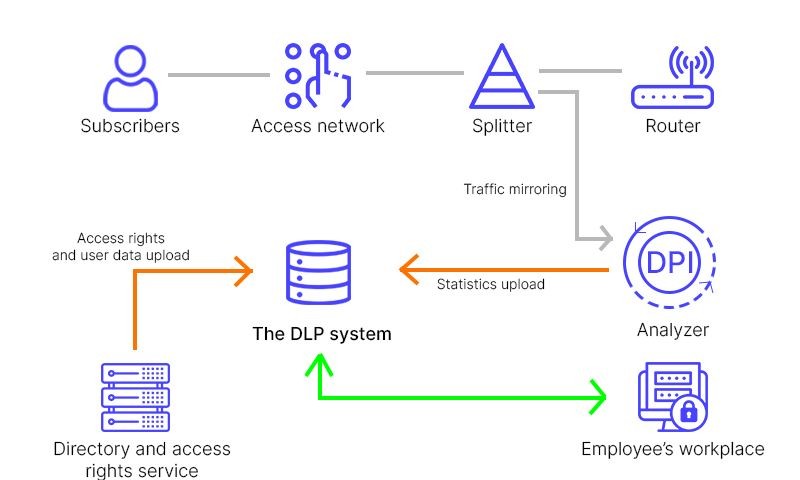

#9. You Need Dual-Layer Guardrails to Stop Data Loss

Employees make mistakes every day. Right now, 2.6% of all employee prompts contain private company data. Workers accidentally paste secret source code or financial numbers right into public AI tools.

Block any prompts that contain passwords or private numbers. Second, perform output sanitization before the AI’s answer reaches the user. This stops the AI from accidentally sharing secrets with the wrong employee.

You can use a second, smaller AI to watch the main AI. Connect this watcher to your data loss prevention (DLP) software. This dual-layer system catches bad inputs from humans and bad outputs from machines.

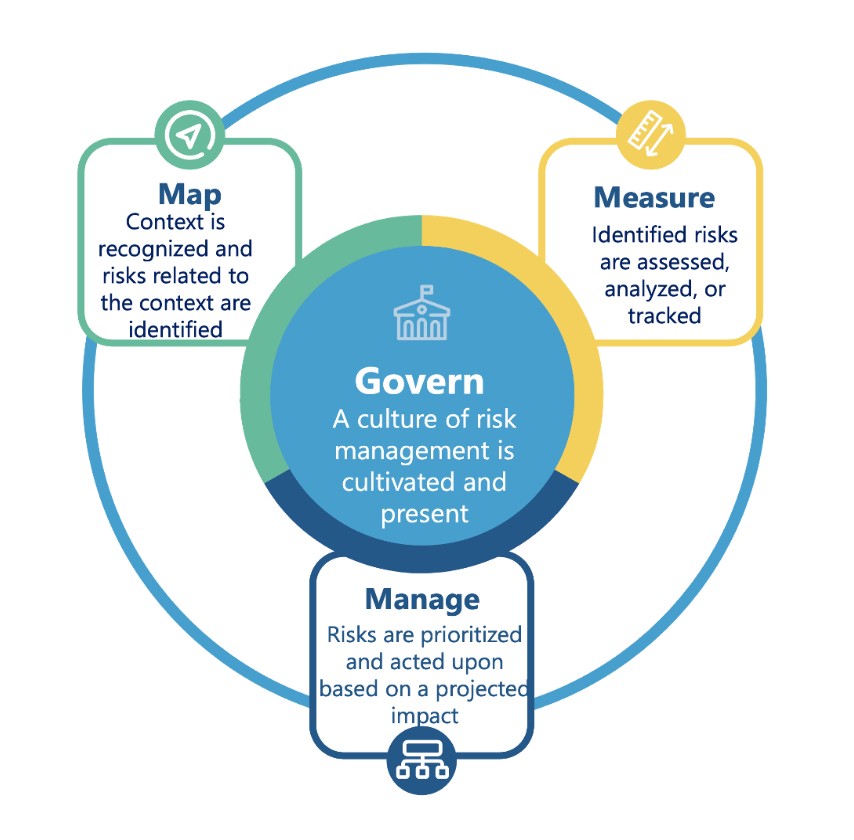

#10. Manage Risk with the NIST AI Framework

Stop guessing about AI safety. You need a real plan for enterprise AI risk management. The best tool for this is the NIST AI RMF. This is a clear, step-by-step framework built by government experts. It moves you away from random security fixes and gives you a structured plan.

The framework has four main parts. First, govern your AI rules and build a clear chain of command. Second, map out exactly where you use AI and identify the specific risks. Third, measure how well your AI works and track its safety metrics. Finally, manage those risks every single day.